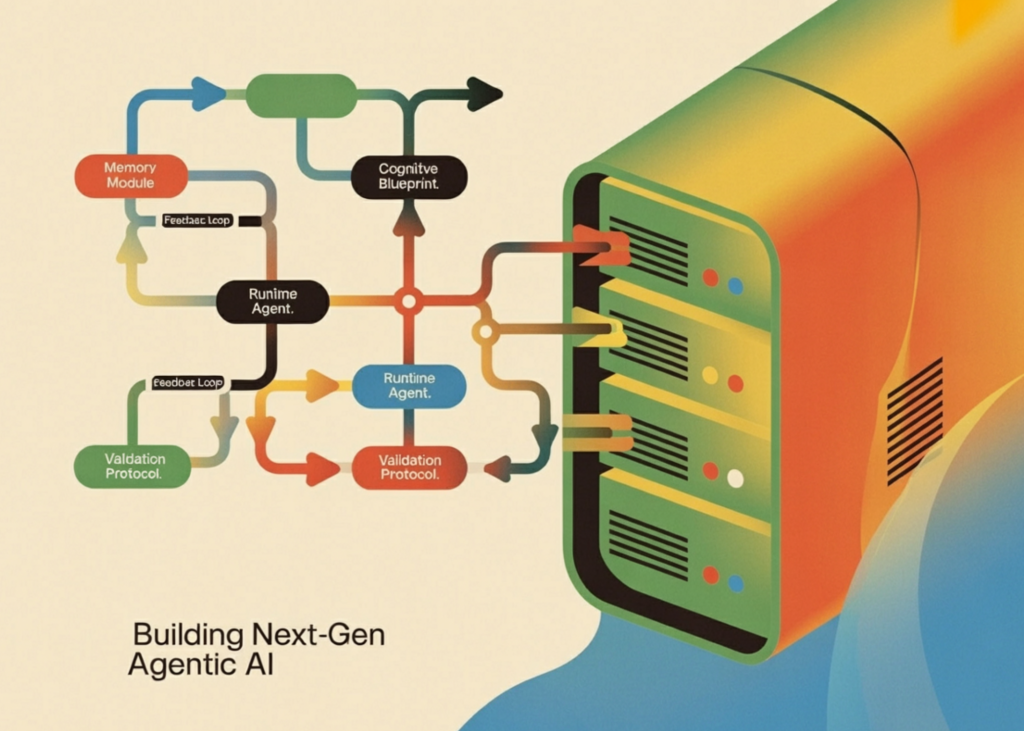

In this tutorial, we build a complete cognitive blueprint and runtime agent framework. We define structured blueprints for identity, goals, planning, memory, validation, and tool access, and use them to create agents that not only respond but also plan, execute, validate, and systematically improve their outputs. Along the way, we show how the same runtime engine can support multiple agent personalities and behaviors through blueprint portability, which keeps the overall design modular, extensible, and practical for advanced agentic AI experimentation.

import json, yaml, time, math, textwrap, datetime, getpass, os
from typing import Any, Callable, Dict, List, Optional
from dataclasses import dataclass, field
from enum import Enum

from openai import OpenAI
from pydantic import BaseModel
from rich.console import Console
from rich.panel import Panel
from rich.table import Table
from rich.tree import Tree

# Read the API key from Colab secrets when available, otherwise prompt for it.
try:
    from google.colab import userdata
    OPENAI_API_KEY = userdata.get('OPENAI_API_KEY')
except Exception:
    OPENAI_API_KEY = getpass.getpass("🔑 Enter your OpenAI API key: ")

os.environ["OPENAI_API_KEY"] = OPENAI_API_KEY
client = OpenAI(api_key=OPENAI_API_KEY)
console = Console()

class PlanningStrategy(str, Enum):
    SEQUENTIAL = "sequential"
    HIERARCHICAL = "hierarchical"
    REACTIVE = "reactive"

class MemoryType(str, Enum):
    SHORT_TERM = "short_term"
    EPISODIC = "episodic"
    PERSISTENT = "persistent"

class BlueprintIdentity(BaseModel):
    name: str
    version: str = "1.0.0"
    description: str
    author: str = "unknown"

class BlueprintMemory(BaseModel):
    type: MemoryType = MemoryType.SHORT_TERM
    window_size: int = 10
    summarize_after: int = 20

class BlueprintPlanning(BaseModel):
    strategy: PlanningStrategy = PlanningStrategy.SEQUENTIAL
    max_steps: int = 8
    max_retries: int = 2
    think_before_acting: bool = True

class BlueprintValidation(BaseModel):
    require_reasoning: bool = True
    min_response_length: int = 10
    forbidden_phrases: List[str] = []

class CognitiveBlueprint(BaseModel):
    identity: BlueprintIdentity
    goals: List[str]
    constraints: List[str] = []
    tools: List[str] = []
    memory: BlueprintMemory = BlueprintMemory()
    planning: BlueprintPlanning = BlueprintPlanning()
    validation: BlueprintValidation = BlueprintValidation()
    system_prompt_extra: str = ""

def load_blueprint_from_yaml(yaml_str: str) -> CognitiveBlueprint:
    # Pydantic validates types and fills in defaults for omitted sections.
    return CognitiveBlueprint(**yaml.safe_load(yaml_str))

RESEARCH_AGENT_YAML = """
identity:
  name: ResearchBot
  version: 1.2.0
  description: Answers research questions using calculation and reasoning
  author: Auton Framework Demo
goals:
  - Answer user questions accurately using available tools
  - Show step-by-step reasoning for all answers
  - Cite the method used for each calculation
constraints:
  - Never fabricate numbers or statistics
  - Always validate mathematical results before reporting
  - Do not answer questions outside your tool capabilities
tools:
  - calculator
  - unit_converter
  - date_calculator
  - search_wikipedia_stub
memory:
  type: episodic
  window_size: 12
  summarize_after: 30
planning:
  strategy: sequential
  max_steps: 6
  max_retries: 2
  think_before_acting: true
validation:
  require_reasoning: true
  min_response_length: 20
  forbidden_phrases:
    - "I don't know"
    - "I cannot determine"
"""

DATA_ANALYST_YAML = """
identity:
  name: DataAnalystBot
  version: 2.0.0
  description: Performs statistical analysis and data summarization
  author: Auton Framework Demo
goals:
  - Compute descriptive statistics for given data
  - Identify trends and anomalies
  - Present findings clearly with numbers
constraints:
  - Only work with numerical data
  - Always report uncertainty when sample size is small (< 5 items)
tools:
  - calculator
  - statistics_engine
  - list_sorter
memory:
  type: short_term
  window_size: 6
planning:
  strategy: hierarchical
  max_steps: 10
  max_retries: 3
  think_before_acting: true
validation:
  require_reasoning: true
  min_response_length: 30
  forbidden_phrases: []
"""

We set up the core environment and define the cognitive blueprint, which structures how an agent thinks and behaves. We create strongly typed models for identity, memory configuration, planning strategy, and validation rules using Pydantic and enumerations. We also define two YAML-based blueprints, which let us configure different agent personalities and capabilities without changing the underlying runtime system.
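To make the "typed blueprint with defaults" idea concrete without any third-party packages, here is a minimal sketch that uses standard-library dataclasses in place of Pydantic. The names `MiniBlueprint`, `PlanningConfig`, and `parse_blueprint` are hypothetical and exist only for this illustration; the input dictionaries stand in for what a YAML parser would return.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import Any, Dict, List


class Strategy(str, Enum):
    SEQUENTIAL = "sequential"
    HIERARCHICAL = "hierarchical"
    REACTIVE = "reactive"


@dataclass
class PlanningConfig:
    strategy: Strategy = Strategy.SEQUENTIAL
    max_steps: int = 8
    max_retries: int = 2


@dataclass
class MiniBlueprint:
    name: str
    goals: List[str]
    tools: List[str] = field(default_factory=list)
    planning: PlanningConfig = field(default_factory=PlanningConfig)


def parse_blueprint(raw: Dict[str, Any]) -> MiniBlueprint:
    """Turn a plain dict (e.g. parsed YAML) into a typed configuration object."""
    planning_raw = dict(raw.get("planning", {}))
    if "strategy" in planning_raw:
        # Enum lookup by value raises ValueError for unknown strategies.
        planning_raw["strategy"] = Strategy(planning_raw["strategy"])
    return MiniBlueprint(
        name=raw["identity"]["name"],
        goals=list(raw["goals"]),
        tools=list(raw.get("tools", [])),
        planning=PlanningConfig(**planning_raw),
    )


research = parse_blueprint({
    "identity": {"name": "ResearchBot"},
    "goals": ["Answer questions with tools"],
    "tools": ["calculator"],
    "planning": {"strategy": "sequential", "max_steps": 6},
})
analyst = parse_blueprint({
    "identity": {"name": "DataAnalystBot"},
    "goals": ["Compute descriptive statistics"],
    "planning": {"strategy": "hierarchical"},
})

print(research.planning.max_steps, research.planning.max_retries)  # 6 2
print(analyst.planning.strategy.value, analyst.tools)              # hierarchical []
```

Omitted sections fall back to defaults, and an unknown strategy string fails at load time rather than deep inside the runtime, which is the same benefit the Pydantic models provide.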

@dataclass
class ToolSpec:
    name: str
    description: str
    parameters: Dict[str, str]
    function: Callable
    returns: str

class ToolRegistry:
    def __init__(self):
        self._tools: Dict[str, ToolSpec] = {}

    def register(self, name: str, description: str,
                 parameters: Dict[str, str], returns: str):
        # Decorator factory: stores the function with its metadata, returns it unchanged.
        def decorator(fn: Callable) -> Callable:
            self._tools[name] = ToolSpec(name, description, parameters, fn, returns)
            return fn
        return decorator

    def get(self, name: str) -> Optional[ToolSpec]:
        return self._tools.get(name)

    def call(self, name: str, **kwargs) -> Any:
        spec = self._tools.get(name)
        if not spec:
            raise ValueError(f"Tool '{name}' not found in registry")
        return spec.function(**kwargs)

    def get_tool_descriptions(self, allowed: List[str]) -> str:
        # Render only the tools a blueprint is allowed to use, for the planner prompt.
        lines = []
        for name in allowed:
            spec = self._tools.get(name)
            if spec:
                params = ", ".join(f"{k}: {v}" for k, v in spec.parameters.items())
                lines.append(
                    f"• {spec.name}({params})\n"
                    f"  → {spec.description}\n"
                    f"  Returns: {spec.returns}"
                )
        return "\n".join(lines)

    def list_tools(self) -> List[str]:
        return list(self._tools.keys())

registry = ToolRegistry()

@registry.register(
    name="calculator",
    description="Evaluates a safe mathematical expression",
    parameters={"expression": "A math expression string, e.g. '2 ** 10 + 5 * 3'"},
    returns="Numeric result as float"
)
def calculator(expression: str) -> str:
    try:
        # Expose only public names from math plus a few numeric builtins.
        # Note: emptying __builtins__ limits eval but is not a true sandbox.
        allowed = {k: v for k, v in math.__dict__.items() if not k.startswith("_")}
        allowed.update({"abs": abs, "round": round, "pow": pow})
        return str(eval(expression, {"__builtins__": {}}, allowed))
    except Exception as e:
        return f"Error: {e}"

@registry.register(
    name="unit_converter",
    description="Converts between common units of measurement",
    parameters={
        "value": "Numeric value to convert",
        "from_unit": "Source unit (km, miles, kg, lbs, celsius, fahrenheit, liters, gallons, meters, ft)",
        "to_unit": "Target unit"
    },
    returns="Converted value as string with units"
)
def unit_converter(value: float, from_unit: str, to_unit: str) -> str:
    conversions = {
        ("km", "miles"): lambda x: x * 0.621371,
        ("miles", "km"): lambda x: x * 1.60934,
        ("kg", "lbs"): lambda x: x * 2.20462,
        ("lbs", "kg"): lambda x: x / 2.20462,
        ("celsius", "fahrenheit"): lambda x: x * 9/5 + 32,
        ("fahrenheit", "celsius"): lambda x: (x - 32) * 5/9,
        ("liters", "gallons"): lambda x: x * 0.264172,
        ("gallons", "liters"): lambda x: x * 3.78541,
        ("meters", "ft"): lambda x: x * 3.28084,
        ("ft", "meters"): lambda x: x / 3.28084,
    }
    key = (from_unit.lower(), to_unit.lower())
    if key in conversions:
        return f"{conversions[key](float(value)):.4f} {to_unit}"
    return f"Conversion from {from_unit} to {to_unit} not supported"

@registry.register(
    name="date_calculator",
    description="Calculates days between two dates, or adds/subtracts days from a date",
    parameters={
        "operation": "'days_between' or 'add_days'",
        "date1": "Date string in YYYY-MM-DD format",
        "date2": "Second date for days_between (YYYY-MM-DD), or number of days for add_days"
    },
    returns="Result as string"
)
def date_calculator(operation: str, date1: str, date2: str) -> str:
    try:
        d1 = datetime.datetime.strptime(date1, "%Y-%m-%d")
        if operation == "days_between":
            d2 = datetime.datetime.strptime(date2, "%Y-%m-%d")
            return f"{abs((d2 - d1).days)} days between {date1} and {date2}"
        elif operation == "add_days":
            # A negative day count subtracts days.
            result = d1 + datetime.timedelta(days=int(date2))
            return f"{result.strftime('%Y-%m-%d')} (added {date2} days to {date1})"
        return f"Unknown operation: {operation}"
    except Exception as e:
        return f"Error: {e}"

@registry.register(
    name="search_wikipedia_stub",
    description="Returns a stub summary for well-known topics (demo, no live internet)",
    parameters={"topic": "Topic to look up"},
    returns="Short text summary"
)
def search_wikipedia_stub(topic: str) -> str:
    stubs = {
        "openai": "OpenAI is an AI research company founded in 2015. It created GPT-4 and the ChatGPT product.",
    }
    for key, val in stubs.items():
        if key in topic.lower():
            return val
    return f"No stub found for '{topic}'. In production, this would query Wikipedia's API."

We implement the tool registry, which lets agents dynamically discover and use external functions. We design a structured system in which tools are registered with metadata, including parameters, descriptions, and return values. We also implement several practical tools, such as a calculator, a unit converter, a date calculator, and a Wikipedia search stub, which the agents can invoke during execution.
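The calculator tool evaluates model-supplied text with `eval`. Emptying `__builtins__` narrows what an expression can reach, but attribute access on ordinary objects still works, so it should not be treated as a sandbox. One possible hardening, shown here as a sketch rather than as part of the original framework, walks the parsed syntax tree and permits only whitelisted arithmetic. The names `safe_calculate`, `_eval_node`, and the specific whitelist are assumptions made for this example.

```python
import ast
import math
import operator

# Whitelisted operators, functions, and constants; anything else is rejected.
_BIN_OPS = {
    ast.Add: operator.add, ast.Sub: operator.sub,
    ast.Mult: operator.mul, ast.Div: operator.truediv,
    ast.FloorDiv: operator.floordiv, ast.Mod: operator.mod,
    ast.Pow: operator.pow,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}
_FUNCS = {"sqrt": math.sqrt, "log": math.log, "sin": math.sin,
          "cos": math.cos, "abs": abs, "round": round}
_CONSTS = {"pi": math.pi, "e": math.e}


def _eval_node(node):
    """Recursively evaluate a node, raising ValueError on anything unlisted."""
    if (isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
        return _BIN_OPS[type(node.op)](_eval_node(node.left), _eval_node(node.right))
    if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
        return _UNARY_OPS[type(node.op)](_eval_node(node.operand))
    if isinstance(node, ast.Name) and node.id in _CONSTS:
        return _CONSTS[node.id]
    if (isinstance(node, ast.Call) and isinstance(node.func, ast.Name)
            and node.func.id in _FUNCS and not node.keywords):
        return _FUNCS[node.func.id](*[_eval_node(a) for a in node.args])
    raise ValueError(f"Unsupported expression element: {type(node).__name__}")


def safe_calculate(expression: str) -> str:
    """Same contract as the calculator tool: returns a string result or 'Error: ...'."""
    try:
        tree = ast.parse(expression, mode="eval")
        return str(_eval_node(tree.body))
    except Exception as exc:
        return f"Error: {exc}"


print(safe_calculate("2 ** 10 + 5 * 3"))     # 1039
print(safe_calculate("sqrt(16) + abs(-2)"))  # 6.0
print(safe_calculate("__import__('os')"))    # Error: Unsupported expression element: Call
```

This sketch does not bound computation cost (a very large exponent can still be slow), so a production tool would likely add size limits as well.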

@registry.register(
    name="statistics_engine",
    description="Computes descriptive statistics on a list of numbers",
    parameters={"numbers": "Comma-separated list of numbers, e.g. '4,8,15,16,23,42'"},
    returns="JSON with mean, median, std_dev, min, max, count"
)
def statistics_engine(numbers: str) -> str:
    try:
        nums = [float(x.strip()) for x in numbers.split(",")]
        n = len(nums)
        mean = sum(nums) / n
        sorted_nums = sorted(nums)
        mid = n // 2
        median = sorted_nums[mid] if n % 2 else (sorted_nums[mid-1] + sorted_nums[mid]) / 2
        # Population standard deviation (divides by n, not n - 1).
        std_dev = math.sqrt(sum((x - mean) ** 2 for x in nums) / n)
        return json.dumps({
            "count": n, "mean": round(mean, 4), "median": round(median, 4),
            "std_dev": round(std_dev, 4), "min": min(nums),
            "max": max(nums), "range": max(nums) - min(nums)
        }, indent=2)
    except Exception as e:
        return f"Error: {e}"

@registry.register(
    name="list_sorter",
    description="Sorts a comma-separated list of numbers",
    parameters={"numbers": "Comma-separated numbers", "order": "'asc' or 'desc'"},
    returns="Sorted comma-separated list"
)
def list_sorter(numbers: str, order: str = "asc") -> str:
    nums = [float(x.strip()) for x in numbers.split(",")]
    nums.sort(reverse=(order == "desc"))
    return ", ".join(str(n) for n in nums)

@dataclass
class MemoryEntry:
    role: str
    content: str
    timestamp: float = field(default_factory=time.time)
    metadata: Dict = field(default_factory=dict)

class MemoryManager:
    def __init__(self, config: BlueprintMemory, llm_client: OpenAI):
        self.config = config
        self.client = llm_client
        self._history: List[MemoryEntry] = []
        self._summary: str = ""

    def add(self, role: str, content: str, metadata: Optional[Dict] = None):
        self._history.append(MemoryEntry(role=role, content=content, metadata=metadata or {}))
        # Only episodic memory is compressed once the history grows past the threshold.
        if (self.config.type == MemoryType.EPISODIC and
                len(self._history) > self.config.summarize_after):
            self._compress_memory()

    def _compress_memory(self):
        # Keep the newest window_size entries verbatim; summarize everything older.
        to_compress = self._history[:-self.config.window_size]
        self._history = self._history[-self.config.window_size:]
        text = "\n".join(f"{e.role}: {e.content[:200]}" for e in to_compress)
        try:
            resp = self.client.chat.completions.create(
                model="gpt-4o-mini",
                messages=[{"role": "user", "content":
                           f"Summarize this conversation history in 3 sentences:\n{text}"}],
                max_tokens=150
            )
            self._summary += " " + resp.choices[0].message.content.strip()
        except Exception:
            # Fall back to a placeholder if the summarization call fails.
            self._summary += f" [compressed {len(to_compress)} messages]"

    def get_messages(self, system_prompt: str) -> List[Dict]:
        messages = [{"role": "system", "content": system_prompt}]
        if self._summary:
            messages.append({"role": "system",
                             "content": f"[Memory Summary]: {self._summary.strip()}"})
        for entry in self._history[-self.config.window_size:]:
            messages.append({
                "role": entry.role if entry.role != "tool" else "assistant",
                "content": entry.content
            })
        return messages

    def clear(self):
        self._history = []
        self._summary = ""

    @property
    def message_count(self) -> int:
        return len(self._history)

We extend the tool ecosystem and introduce the memory management layer, which stores conversation history and compresses it when necessary. We implement statistical tools and sorting utilities that let the data analyst agent perform structured numerical operations. At the same time, we design a memory system that tracks interactions, summarizes long histories, and supplies contextual messages to the language model.
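The interplay between the window size and the summarization threshold is easier to see with small numbers. The following sketch mirrors the compression rule with a placeholder summarizer instead of a model call; `WindowedMemory` and `naive_summarizer` are hypothetical names used only here, and the thresholds (3 and 5) are chosen to keep the trace short.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


def naive_summarizer(messages: List[Dict[str, str]]) -> str:
    """Placeholder for the LLM call: only reports how many messages were folded."""
    return f"[compressed {len(messages)} messages]"


@dataclass
class WindowedMemory:
    window_size: int = 3
    summarize_after: int = 5
    summarizer: Callable[[List[Dict[str, str]]], str] = naive_summarizer
    history: List[Dict[str, str]] = field(default_factory=list)
    summary: str = ""

    def add(self, role: str, content: str) -> None:
        self.history.append({"role": role, "content": content})
        if len(self.history) > self.summarize_after:
            # Fold everything except the newest `window_size` messages.
            old = self.history[:-self.window_size]
            self.history = self.history[-self.window_size:]
            self.summary = (self.summary + " " + self.summarizer(old)).strip()

    def get_messages(self, system_prompt: str) -> List[Dict[str, str]]:
        messages = [{"role": "system", "content": system_prompt}]
        if self.summary:
            messages.append({"role": "system",
                             "content": f"[Memory Summary]: {self.summary}"})
        return messages + self.history[-self.window_size:]


memory = WindowedMemory()
for i in range(6):
    memory.add("user", f"message {i}")

print(len(memory.history))  # 3
print(memory.summary)       # [compressed 3 messages]
print(len(memory.get_messages("You are a helpful agent.")))  # 5
```

The sixth message pushes the history past the threshold, so the three oldest entries collapse into one summary line while the three newest remain verbatim.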

@dataclass
class PlanStep:
    step_id: int
    description: str
    tool: Optional[str]
    tool_args: Dict[str, Any]
    reasoning: str

@dataclass
class Plan:
    task: str
    steps: List[PlanStep]
    strategy: PlanningStrategy

class Planner:
    def __init__(self, blueprint: CognitiveBlueprint,
                 registry: ToolRegistry, llm_client: OpenAI):
        self.blueprint = blueprint
        self.registry = registry
        self.client = llm_client

    def _build_planner_prompt(self) -> str:
        bp = self.blueprint
        # .value keeps the prompt text as e.g. "sequential" on every Python version.
        return textwrap.dedent(f"""
            You are {bp.identity.name}, version {bp.identity.version}.
            {bp.identity.description}

            ## Your Goals:
            {chr(10).join(f'  - {g}' for g in bp.goals)}

            ## Your Constraints:
            {chr(10).join(f'  - {c}' for c in bp.constraints)}

            ## Available Tools:
            {self.registry.get_tool_descriptions(bp.tools)}

            ## Planning Strategy: {bp.planning.strategy.value}
            ## Max Steps: {bp.planning.max_steps}

            Given a user task, produce a JSON execution plan with this exact structure:
            {{
              "steps": [
                {{
                  "step_id": 1,
                  "description": "What this step does",
                  "tool": "tool_name or null if no tool needed",
                  "tool_args": {{"arg1": "value1"}},
                  "reasoning": "Why this step is needed"
                }}
              ]
            }}

            Rules:
            - Only use tools listed above
            - Set tool to null for pure reasoning steps
            - Keep steps <= {bp.planning.max_steps}
            - Return ONLY valid JSON, no markdown fences

            {bp.system_prompt_extra}
        """).strip()

    def plan(self, task: str, memory: MemoryManager) -> Plan:
        system_prompt = self._build_planner_prompt()
        messages = memory.get_messages(system_prompt)
        messages.append({"role": "user", "content":
                         f"Create a plan to complete this task: {task}"})
        resp = self.client.chat.completions.create(
            model="gpt-4o-mini", messages=messages,
            max_tokens=1200, temperature=0.2
        )
        raw = resp.choices[0].message.content.strip()
        # Strip Markdown fences in case the model adds them despite the instructions.
        raw = raw.replace("```json", "").replace("```", "").strip()
        data = json.loads(raw)
        steps = [
            PlanStep(
                step_id=s["step_id"], description=s["description"],
                # "or {}" also covers an explicit null for tool_args.
                tool=s.get("tool"), tool_args=s.get("tool_args") or {},
                reasoning=s.get("reasoning", "")
            )
            for s in data["steps"]
        ]
        return Plan(task=task, steps=steps, strategy=self.blueprint.planning.strategy)

@dataclass
class StepResult:
    step_id: int
    success: bool
    output: str
    tool_used: Optional[str]
    error: Optional[str] = None

@dataclass
class ExecutionTrace:
    plan: Plan
    results: List[StepResult]
    final_answer: str

class Executor:
    def __init__(self, blueprint: CognitiveBlueprint,
                 registry: ToolRegistry, llm_client: OpenAI):
        self.blueprint = blueprint
        self.registry = registry
        self.client = llm_client

We implement the planning system, which transforms a user task into a structured execution plan composed of multiple steps. We design a planner that instructs the language model to produce a JSON plan containing the reasoning, tool selection, and arguments for each step. This planning layer lets the agent break complex problems into smaller executable actions before running them.
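Because the plan arrives as free text from a model, it can help to check it against the blueprint before anything is executed. The sketch below is one possible way to do that, not part of the original framework: `parse_plan` is a hypothetical helper that strips fences, parses the JSON, and rejects plans that exceed the step limit or name a tool outside the allowed list. The sample plan is hand-written for illustration.

```python
import json
from typing import Any, Dict, List

FENCE = "`" * 3  # three backticks, built indirectly so this snippet has no literal fence


def parse_plan(raw: str, allowed_tools: List[str], max_steps: int) -> List[Dict[str, Any]]:
    """Parse a model-produced JSON plan and reject steps the blueprint does not permit."""
    cleaned = raw.replace(FENCE + "json", "").replace(FENCE, "").strip()
    data = json.loads(cleaned)  # json.JSONDecodeError is a ValueError subclass
    steps = data.get("steps") if isinstance(data, dict) else None
    if not isinstance(steps, list) or not steps:
        raise ValueError("Plan must contain a non-empty 'steps' list")
    if len(steps) > max_steps:
        raise ValueError(f"Plan has {len(steps)} steps, limit is {max_steps}")
    parsed = []
    for step in steps:
        tool = step.get("tool")
        if tool in ("", "null"):
            tool = None  # models sometimes emit the string "null"
        if tool is not None and tool not in allowed_tools:
            raise ValueError(f"Step {step.get('step_id')} uses unlisted tool '{tool}'")
        parsed.append({
            "step_id": int(step["step_id"]),
            "description": str(step["description"]),
            "tool": tool,
            "tool_args": step.get("tool_args") or {},
            "reasoning": step.get("reasoning", ""),
        })
    return parsed


sample = FENCE + """json
{"steps": [
  {"step_id": 1, "description": "Compute 15% of 2500", "tool": "calculator",
   "tool_args": {"expression": "0.15 * 2500"}, "reasoning": "Direct arithmetic"},
  {"step_id": 2, "description": "Explain the result", "tool": null,
   "tool_args": null, "reasoning": "Summarize for the user"}
]}
""" + FENCE

for step in parse_plan(sample, allowed_tools=["calculator"], max_steps=6):
    print(step["step_id"], step["tool"], step["tool_args"])
# 1 calculator {'expression': '0.15 * 2500'}
# 2 None {}
```

Failing early with a `ValueError` gives a retry loop a concrete message to feed back to the model instead of a tool error halfway through execution.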

    # Executor methods (these continue the Executor class defined above).
    def execute_plan(self, plan: Plan, memory: MemoryManager,
                     verbose: bool = True) -> ExecutionTrace:
        results: List[StepResult] = []
        if verbose:
            console.print(f"\n[bold yellow]⚡ Executing:[/] {plan.task}")
            console.print(f"   Strategy: {plan.strategy.value} | Steps: {len(plan.steps)}")

        for step in plan.steps:
            if verbose:
                console.print(f"\n  [cyan]Step {step.step_id}:[/] {step.description}")
            try:
                if step.tool and step.tool != "null":
                    # Tool step: dispatch to the registry with the planned arguments.
                    if verbose:
                        console.print(f"   🔧 Tool: [green]{step.tool}[/] | Args: {step.tool_args}")
                    output = self.registry.call(step.tool, **step.tool_args)
                    result = StepResult(step.step_id, True, str(output), step.tool)
                    if verbose:
                        console.print(f"   ✅ Result: {output}")
                else:
                    # Reasoning step: ask the model, passing earlier step outputs as context.
                    context_text = "\n".join(
                        f"Step {r.step_id} result: {r.output}" for r in results)
                    prompt = (
                        f"Previous results:\n{context_text}\n\n"
                        f"Now complete this step: {step.description}\n"
                        f"Reasoning hint: {step.reasoning}"
                    ) if context_text else (
                        f"Complete this step: {step.description}\n"
                        f"Reasoning hint: {step.reasoning}"
                    )
                    sys_prompt = (
                        f"You are {self.blueprint.identity.name}. "
                        f"{self.blueprint.identity.description}. "
                        f"Constraints: {'; '.join(self.blueprint.constraints)}"
                    )
                    resp = self.client.chat.completions.create(
                        model="gpt-4o-mini",
                        messages=[
                            {"role": "system", "content": sys_prompt},
                            {"role": "user", "content": prompt}
                        ],
                        max_tokens=500, temperature=0.3
                    )
                    output = resp.choices[0].message.content.strip()
                    result = StepResult(step.step_id, True, output, None)
                    if verbose:
                        preview = output[:120] + "..." if len(output) > 120 else output
                        console.print(f"   🤔 Reasoning: {preview}")
            except Exception as e:
                # A failed step is recorded and execution continues with the next step.
                result = StepResult(step.step_id, False, "", step.tool, str(e))
                if verbose:
                    console.print(f"   ❌ Error: {e}")
            results.append(result)

        final_answer = self._synthesize(plan, results, memory)
        return ExecutionTrace(plan=plan, results=results, final_answer=final_answer)

    def _synthesize(self, plan: Plan, results: List[StepResult],
                    memory: MemoryManager) -> str:
        steps_summary = "\n".join(
            f"Step {r.step_id} ({'✅' if r.success else '❌'}): {r.output[:300]}"
            for r in results
        )
        synthesis_prompt = (
            f"Original task: {plan.task}\n\n"
            f"Step results:\n{steps_summary}\n\n"
            f"Provide a clear, complete final answer. Integrate all step results."
        )
        sys_prompt = (
            f"You are {self.blueprint.identity.name}. "
            + ("Always show your reasoning. " if self.blueprint.validation.require_reasoning else "")
            + f"Goals: {'; '.join(self.blueprint.goals)}"
        )
        messages = memory.get_messages(sys_prompt)
        messages.append({"role": "user", "content": synthesis_prompt})
        resp = self.client.chat.completions.create(
            model="gpt-4o-mini", messages=messages,
            max_tokens=600, temperature=0.3
        )
        return resp.choices[0].message.content.strip()

@dataclass
class ValidationResult:
    passed: bool
    issues: List[str]
    score: float

class Validator:
    def __init__(self, blueprint: CognitiveBlueprint, llm_client: OpenAI):
        self.blueprint = blueprint
        self.client = llm_client

    def validate(self, answer: str, task: str,
                 use_llm_check: bool = False) -> ValidationResult:
        issues = []
        v = self.blueprint.validation
        if len(answer) < v.min_response_length:
            issues.append(f"Response too short: {len(answer)} chars (min: {v.min_response_length})")
        answer_lower = answer.lower()
        for phrase in v.forbidden_phrases:
            if phrase.lower() in answer_lower:
                issues.append(f"Forbidden phrase detected: '{phrase}'")
        if v.require_reasoning:
            # Keyword heuristic: any one of these substrings counts as visible reasoning.
            indicators = ["because", "therefore", "since", "step", "first",
                          "result", "calculated", "computed", "found that"]
            if not any(ind in answer_lower for ind in indicators):
                issues.append("Response lacks visible reasoning or explanation")
        if use_llm_check:
            issues.extend(self._llm_quality_check(answer, task))
        # Each issue costs 0.25; the score is floored at 0.0.
        return ValidationResult(passed=len(issues) == 0,
                                issues=issues,
                                score=max(0.0, 1.0 - len(issues) * 0.25))

    def _llm_quality_check(self, answer: str, task: str) -> List[str]:
        prompt = (
            f"Task: {task}\n\nAnswer: {answer[:500]}\n\n"
            f'Does this answer address the task? Reply JSON: {{"on_topic": true/false, "issue": "..."}}'
        )
        try:
            resp = self.client.chat.completions.create(
                model="gpt-4o-mini",
                messages=[{"role": "user", "content": prompt}],
                max_tokens=100
            )
            raw = resp.choices[0].message.content.strip().replace("```json", "").replace("```", "")
            data = json.loads(raw)
            if not data.get("on_topic", True):
                return [f"LLM quality check: {data.get('issue', 'off-topic')}"]
        except Exception:
            # If the check itself fails, do not penalize the answer.
            pass
        return []

We build the executor and the validation logic. The executor runs the steps generated by the planner: depending on the step definition, it either calls a registered tool or performs reasoning through the language model. We also add a validator that checks the final answer against blueprint constraints such as minimum length, reasoning requirements, and forbidden phrases.
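The scoring rule is simple arithmetic: every issue subtracts 0.25 from a starting score of 1.0, with a floor of 0.0, so four or more issues all score zero. As a worked example, here is a self-contained sketch of the rule-based checks only (no model call); `check_answer` is a hypothetical stand-in and the two sample answers are invented for illustration.

```python
from typing import List, Tuple

REASONING_MARKERS = ("because", "therefore", "since", "step", "first",
                     "result", "calculated", "computed", "found that")


def check_answer(answer: str, min_length: int, forbidden: List[str],
                 require_reasoning: bool) -> Tuple[bool, float, List[str]]:
    """Rule-based checks only; each issue costs 0.25, floored at 0.0."""
    issues = []
    lowered = answer.lower()
    if len(answer) < min_length:
        issues.append("too short")
    issues += [f"forbidden phrase: {p}" for p in forbidden if p.lower() in lowered]
    if require_reasoning and not any(m in lowered for m in REASONING_MARKERS):
        issues.append("no visible reasoning")
    score = max(0.0, 1.0 - 0.25 * len(issues))
    return (not issues, score, issues)


good = "First, 15% of 2,500 is 0.15 * 2500, so the result is 375."
bad = "I don't know."

print(check_answer(good, 20, ["I don't know"], True))
# (True, 1.0, [])
print(check_answer(bad, 20, ["I don't know"], True))
# (False, 0.25, ['too short', "forbidden phrase: I don't know", 'no visible reasoning'])
```

Note that the reasoning check is plain substring matching, so it can pass on incidental words (for example "since" inside "sincerely"); it is a cheap signal, not proof that an explanation is present.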

@dataclass
class AgentResponse:
    agent_name: str
    task: str
    final_answer: str
    trace: Optional[ExecutionTrace]        # None if planning failed on the first attempt
    validation: Optional[ValidationResult]
    retries: int
    total_steps: int

class RuntimeEngine:
    def __init__(self, blueprint: CognitiveBlueprint,
                 registry: ToolRegistry, llm_client: OpenAI):
        self.blueprint = blueprint
        self.memory = MemoryManager(blueprint.memory, llm_client)
        self.planner = Planner(blueprint, registry, llm_client)
        self.executor = Executor(blueprint, registry, llm_client)
        self.validator = Validator(blueprint, llm_client)

    def run(self, task: str, verbose: bool = True) -> AgentResponse:
        bp = self.blueprint
        if verbose:
            console.print(Panel(
                f"[bold]Agent:[/] {bp.identity.name} v{bp.identity.version}\n"
                f"[bold]Task:[/] {task}\n"
                f"[bold]Strategy:[/] {bp.planning.strategy.value} | "
                f"Max Steps: {bp.planning.max_steps} | "
                f"Max Retries: {bp.planning.max_retries}",
                title="🚀 Runtime Engine Starting", border_style="blue"
            ))

        self.memory.add("user", task)
        retries, trace, validation = 0, None, None

        for attempt in range(bp.planning.max_retries + 1):
            if attempt > 0 and verbose:
                console.print(f"\n[yellow]⟳ Retry {attempt}/{bp.planning.max_retries}[/]")
                console.print(f"   Issues: {', '.join(validation.issues)}")

            if verbose:
                console.print("\n[bold magenta]📋 Phase 1: Planning...[/]")
            try:
                plan = self.planner.plan(task, self.memory)
                if verbose:
                    tree = Tree(f"[bold]Plan ({len(plan.steps)} steps)[/]")
                    for s in plan.steps:
                        icon = "🔧" if s.tool else "🤔"
                        branch = tree.add(f"{icon} Step {s.step_id}: {s.description}")
                        if s.tool:
                            branch.add(f"[green]Tool:[/] {s.tool}")
                            branch.add(f"[yellow]Args:[/] {s.tool_args}")
                    console.print(tree)
            except Exception as e:
                if verbose:
                    console.print(f"[red]Planning failed:[/] {e}")
                break

            if verbose:
                console.print("\n[bold magenta]⚡ Phase 2: Executing...[/]")
            trace = self.executor.execute_plan(plan, self.memory, verbose=verbose)

            if verbose:
                console.print("\n[bold magenta]✅ Phase 3: Validating...[/]")
            validation = self.validator.validate(trace.final_answer, task)
            if verbose:
                status = "[green]PASSED[/]" if validation.passed else "[red]FAILED[/]"
                console.print(f"   Validation: {status} | Score: {validation.score:.2f}")
                for issue in validation.issues:
                    console.print(f"   ⚠️ {issue}")

            # Stop on success, or when no attempts remain. Stopping here keeps
            # `retries` accurate and avoids storing the last answer twice.
            if validation.passed or attempt == bp.planning.max_retries:
                break

            retries += 1
            self.memory.add("assistant", trace.final_answer)
            self.memory.add("user",
                f"Your previous answer had issues: {'; '.join(validation.issues)}. "
                f"Please improve."
            )

        if trace:
            self.memory.add("assistant", trace.final_answer)

        if verbose:
            console.print(Panel(
                trace.final_answer if trace else "No answer generated",
                title=f"🎯 Final Answer - {bp.identity.name}",
                border_style="green"
            ))

        return AgentResponse(
            agent_name=bp.identity.name, task=task,
            final_answer=trace.final_answer if trace else "",
            trace=trace, validation=validation,
            retries=retries,
            total_steps=len(trace.results) if trace else 0
        )

    def reset_memory(self):
        self.memory.clear()

def build_engine(blueprint_yaml: str, registry: ToolRegistry,
                 llm_client: OpenAI) -> RuntimeEngine:
    return RuntimeEngine(load_blueprint_from_yaml(blueprint_yaml), registry, llm_client)

if __name__ == "__main__":
    print("\n" + "="*60)
    print("DEMO 1: ResearchBot")
    print("="*60)
    research_engine = build_engine(RESEARCH_AGENT_YAML, registry, client)
    research_engine.run(
        task=(
            "how many steps of 20cm height would that be? Also, if I burn 0.15 "
            "calories per step, what's the total calorie burn? Show all calculations."
        )
    )

    print("\n" + "="*60)
    print("DEMO 2: DataAnalystBot")
    print("="*60)
    analyst_engine = build_engine(DATA_ANALYST_YAML, registry, client)
    analyst_engine.run(
        task=(
            "Analyze this dataset of monthly sales figures (in thousands): "
            "142, 198, 173, 155, 221, 189, 203, 167, 244, 198, 212, 231. "
            "Compute key statistics, identify the best and worst months, "
            "and calculate growth from first to last month."
        )
    )

    print("\n" + "="*60)
    print("PORTABILITY DEMO: Same task → 2 different blueprints")
    print("="*60)
    SHARED_TASK = "Calculate 15% of 2,500 and tell me the result."
    responses = {}
    for name, yaml_str in [
        ("ResearchBot", RESEARCH_AGENT_YAML),
        ("DataAnalystBot", DATA_ANALYST_YAML),
    ]:
        # A fresh engine per blueprint, so the agents share code but not memory.
        eng = build_engine(yaml_str, registry, client)
        responses[name] = eng.run(SHARED_TASK, verbose=False)

    table = Table(title="🔄 Blueprint Portability", show_header=True, show_lines=True)
    table.add_column("Agent", style="cyan", width=18)
    table.add_column("Steps", style="yellow", width=6)
    table.add_column("Valid?", width=7)
    table.add_column("Score", width=6)
    table.add_column("Answer Preview", width=55)
    for name, r in responses.items():
        # validation is None when planning failed before any answer was produced.
        table.add_row(
            name, str(r.total_steps),
            "✅" if r.validation and r.validation.passed else "❌",
            f"{r.validation.score:.2f}" if r.validation else "n/a",
            r.final_answer[:140] + "..."
        )
    console.print(table)

We assemble the runtime engine, which orchestrates planning, execution, memory updates, and validation into a fully autonomous workflow. We run several demonstrations showing how different blueprints produce different behaviors while using the same core architecture. Finally, we illustrate blueprint portability by running the same task on two agents and comparing their results.
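Stripped of printing and model calls, the engine's control flow is a plan-execute-validate loop that feeds validation issues back into the next attempt. The sketch below isolates that loop with stub functions so it can run offline; `run_with_retries`, `stub_agent`, and `stub_validator` are hypothetical names, and the stubs only imitate an agent that improves once it receives feedback.

```python
from typing import Callable, List, Tuple


def run_with_retries(task: str,
                     attempt_fn: Callable[[str, List[str]], str],
                     validate_fn: Callable[[str], List[str]],
                     max_retries: int = 2) -> Tuple[str, int, List[str]]:
    """Call attempt_fn until validate_fn reports no issues or retries run out.

    Returns (answer, retries_used, remaining_issues).
    """
    feedback: List[str] = []
    answer = ""
    issues: List[str] = []
    for attempt in range(max_retries + 1):
        answer = attempt_fn(task, feedback)
        issues = validate_fn(answer)
        if not issues:
            return answer, attempt, []
        feedback = issues  # passed to the next attempt, like the feedback message in memory
    return answer, max_retries, issues


# Stub "agent": gives a bare number first, then explains once it gets feedback.
def stub_agent(task: str, feedback: List[str]) -> str:
    if feedback:
        return "The result is 375 because 0.15 * 2500 = 375."
    return "375"


def stub_validator(answer: str) -> List[str]:
    return [] if "because" in answer else ["Response lacks visible reasoning"]


print(run_with_retries("Calculate 15% of 2,500", stub_agent, stub_validator))
# ('The result is 375 because 0.15 * 2500 = 375.', 1, [])
```

Swapping `attempt_fn` for a different blueprint's planner and executor changes the behavior without touching the loop, which is the portability property the demo table illustrates.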

In conclusion, we built a fully functional Auton-style runtime system that integrates cognitive blueprints, tool registries, memory management, planning, execution, and validation into one cohesive framework. We showed how different agents can share the same underlying architecture while behaving differently through custom blueprints, which highlights the flexibility of the design. Through this implementation, we not only explored how modern runtime agents work but also built a solid foundation that we can extend with richer tools, stronger memory systems, and more advanced autonomous behaviors.
